Superhuman Data for Finance

The reasoning layer for long-horizon finance agents.

We capture tacit human reasoning for open-ended tasks, starting with finance.

Building AI for open-ended domains requires higher-quality training data

Reasoning traces captured in real time, structured for model training.

Existing data providers ask experts to catalogue their reasoning retrospectively. The result is a reconstruction, shaped by hindsight, narrative instinct, and the impulse to sound coherent. Engram captures reasoning as it happens.

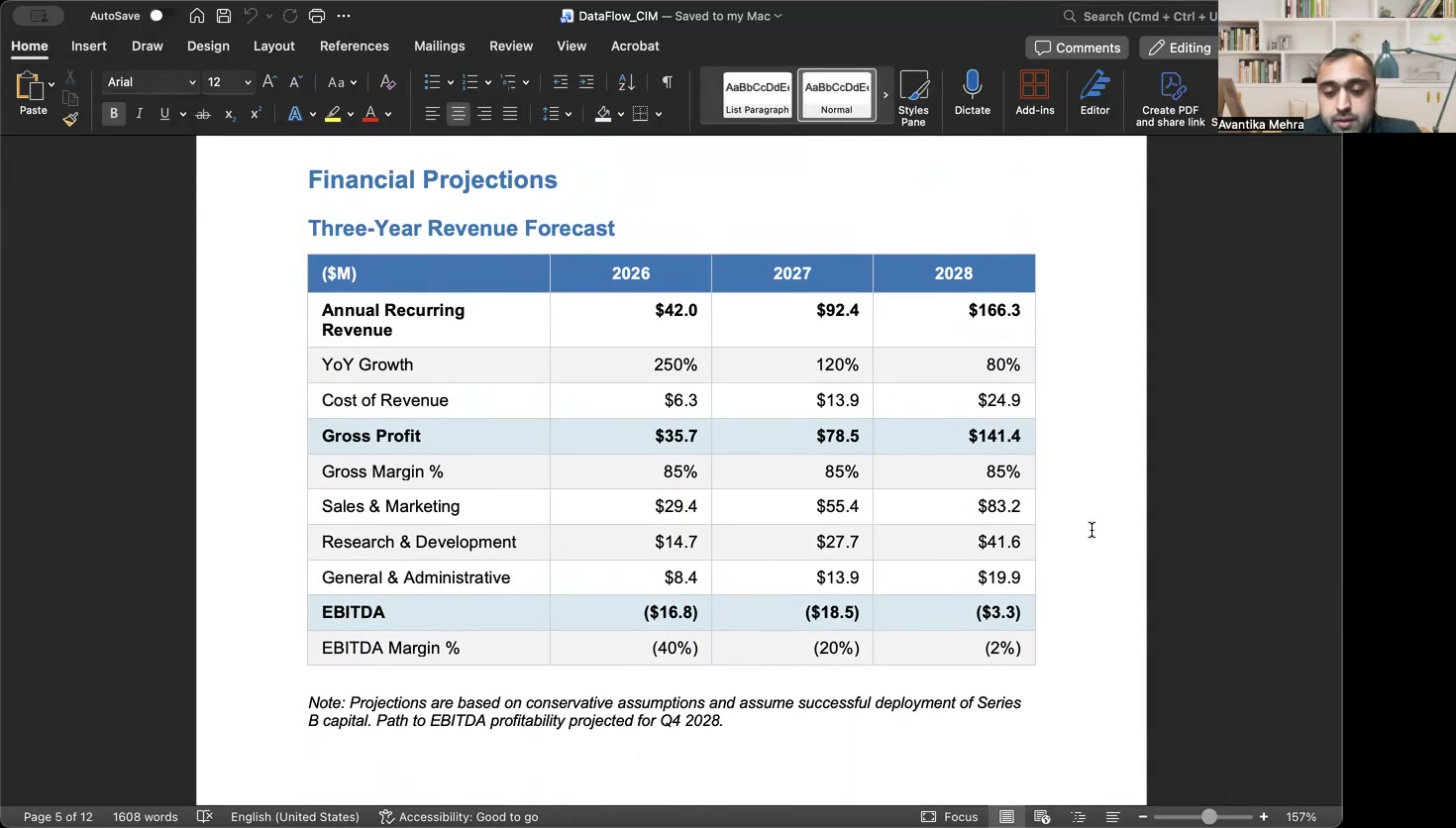

{
  "event_type": "CONCERN",
  "start_ts": 1421.3,
  "end_ts": 1438.7,
  "transcript": "Wait — the EBITDA margin assumption doesn't hold if you back out the one-time items...",
  "confidence": 0.94,
  "pause_delay": 4.2,
  "screen_state": "event_1421.300.jpg",
  "revision_triggered": true,
  "label": "EBITDA margin challenge"
}

Live expert reasoning and on-screen behavior are captured to generate fine-tuning training bundles

A recorded expert session is decomposed into structured cognitive events that are timestamped, cross-referenced against screen behavior, and validated against behavioral signals. Each session yields two outputs: explainability dashboards for human review, and SFT bundles, including preference signals, for post-training.
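As a rough illustration of what one structured cognitive event could look like in code, the sketch below loads the sample event shown above into a typed record and runs two basic sanity checks. The class name `CognitiveEvent`, the helper `parse_event`, and the reading of the timestamps as seconds are assumptions made for this example, not a description of Engram's actual schema or tooling.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class CognitiveEvent:
    """Hypothetical typed view of one event; field names mirror the sample JSON."""
    event_type: str
    start_ts: float
    end_ts: float
    transcript: str
    confidence: float
    pause_delay: float
    screen_state: str
    revision_triggered: bool
    label: str

    @property
    def duration(self) -> float:
        # Assumes start_ts and end_ts share one unit (seconds is a guess).
        return self.end_ts - self.start_ts


def parse_event(raw: str) -> CognitiveEvent:
    """Parse one JSON event; missing or unexpected keys raise TypeError."""
    event = CognitiveEvent(**json.loads(raw))
    if event.end_ts < event.start_ts:
        raise ValueError("end_ts precedes start_ts")
    if not 0.0 <= event.confidence <= 1.0:
        raise ValueError("confidence outside [0, 1]")
    return event


# Sample event from the page, with the transcript abbreviated.
SAMPLE = """
{
  "event_type": "CONCERN",
  "start_ts": 1421.3,
  "end_ts": 1438.7,
  "transcript": "the EBITDA margin assumption doesn't hold...",
  "confidence": 0.94,
  "pause_delay": 4.2,
  "screen_state": "event_1421.300.jpg",
  "revision_triggered": true,
  "label": "EBITDA margin challenge"
}
"""

event = parse_event(SAMPLE)
```

A frozen dataclass is used here so that a parsed event cannot be mutated downstream; whether the real pipeline treats events as immutable is not stated in the source.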

Codifying wisdom into reasoning trace packages provides three compounding advantages.

Each session produces three interlocking training signals: event classification (per-event cognitive typing), reasoning chains (multi-step logic reconstruction), and session synthesis (full-session analysis). The deliberative structure — hesitation, revision, hypothesis rejection — is encoded directly in the trace.
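One way to picture the three signals is as three views over the same ordered list of events. The sketch below is only a guess at the shape: the function `build_training_signals`, its output keys, the second sample event, and the `REVISION` event type are all hypothetical and invented for illustration. It also reduces the reasoning chain to a time-ordered list of steps, which is much simpler than the multi-step logic reconstruction described above.

```python
from collections import Counter


def build_training_signals(events: list[dict]) -> dict:
    """Derive three illustrative views from one session's events."""
    ordered = sorted(events, key=lambda e: e["start_ts"])

    # 1. Event classification: one example per event (transcript -> type).
    classification = [
        {"input": e["transcript"], "target": e["event_type"]} for e in ordered
    ]

    # 2. Reasoning chain: events in time order, keeping the revision flag
    #    so hesitation and revision stay visible in the trace.
    chain = [
        {
            "step": i + 1,
            "event_type": e["event_type"],
            "label": e["label"],
            "revision_triggered": e["revision_triggered"],
        }
        for i, e in enumerate(ordered)
    ]

    # 3. Session synthesis: coarse whole-session statistics.
    synthesis = {
        "event_counts": dict(Counter(e["event_type"] for e in ordered)),
        "revisions": sum(1 for e in ordered if e["revision_triggered"]),
    }

    return {
        "event_classification": classification,
        "reasoning_chain": chain,
        "session_synthesis": synthesis,
    }


# Two events, deliberately out of order; the second one is made up.
signals = build_training_signals([
    {"event_type": "REVISION", "start_ts": 1440.0, "end_ts": 1462.5,
     "transcript": "(made-up follow-up utterance)", "label": "made-up label",
     "revision_triggered": False},
    {"event_type": "CONCERN", "start_ts": 1421.3, "end_ts": 1438.7,
     "transcript": "the EBITDA margin assumption doesn't hold...",
     "label": "EBITDA margin challenge", "revision_triggered": True},
])
```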

Every training example embeds the screenshot the expert was looking at when they spoke — base64-encoded inline via VISION format. The model learns situated reasoning: cognition grounded against the specific financial data on screen. Voice cross-references visual context, making the data self-verifying by design.
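To make the inline embedding concrete, here is a minimal sketch of how a screenshot might be base64-encoded into a training example. The source does not specify what the VISION format looks like, so the chat-style message layout, the `image_url` data URI, and the function name `build_vision_example` are assumptions for illustration only.

```python
import base64


def build_vision_example(transcript: str, screenshot: bytes, label: str) -> dict:
    """Pair an utterance with the screenshot that was on screen.

    The record layout is hypothetical; only the base64 step reflects
    what the text above describes.
    """
    encoded = base64.b64encode(screenshot).decode("ascii")
    return {
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": transcript},
                    {
                        "type": "image_url",
                        # Data URI keeps the image inline with the example.
                        "image_url": {"url": f"data:image/jpeg;base64,{encoded}"},
                    },
                ],
            },
            {"role": "assistant", "content": label},
        ]
    }


# Placeholder bytes stand in for the contents of event_1421.300.jpg.
fake_jpeg = b"\xff\xd8\xff\xe0 placeholder image bytes"
example = build_vision_example(
    "the EBITDA margin assumption doesn't hold...",
    fake_jpeg,
    "EBITDA margin challenge",
)
```

Embedding the image inline makes each example self-contained, at the cost of roughly a one-third size increase from base64 encoding.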

Each session refines the reasoning format itself — accumulating branching patterns, edge-case heuristics, and decision-point taxonomies that later sessions build upon. Session 200 is structurally richer than session 10. The corpus compounds in depth, not just volume.

Cement your legacy into an intellectual endowment

Existing compensation architecture for AI training data decouples expert contribution from downstream value creation. In the classic gig work model, an expert's judgment compounds indefinitely inside the models it trains, while the expert captures none of that compounding value.

The heuristics refined across years of practice are an intellectual legacy. We aim to cement and preserve that legacy.

By participating in Engram, the industry's most capable practitioners preserve their knowledge in structured form and have its value returned to them with every model that learns from it.

No residual claim.

For customers, pricing is straightforward. The royalty structure is how we compensate experts on our side and doesn't create downstream obligations for your team.

Interested in contributing?

Become an Expert

The data layer powering AI in finance.

Built for any team whose competitive advantage depends on the quality of financial reasoning their models can produce.

Our team and advisors come from

Interested in Engram?

We're working with a select group of design partners. Schedule a conversation to learn more.